Introduction

At the Sundance Film Festival in 2012, the journalist and filmmaker Nonny de la Peña debuted what is now recognized as the first documentary produced in virtual reality.

Presented as part of the New Frontiers section, Hunger in Los Angeles immersed viewers in the reality of hunger in America. Created with the Unity video game platform, the piece was experienced through a prototype headset built by an intern and lab technician named Palmer Luckey at the University of Southern California, where de la Peña was overseeing the efforts as a research fellow. The headset allowed visitors to walk around inside a food bank in downtown Los Angeles and witness a scene from 2009 in which a man collapsed in diabetic shock while waiting in line.

Nonny de la Peña

Able to observe the characters from multiple angles, viewers felt “present” within the experience. “People broke down in tears as they handed back the goggles,” says de la Peña. “That’s when I knew this tool could let viewers experience and understand an event in a completely new way.”

Hunger in Los Angeles pioneered a new approach to immersive storytelling in journalism. Nonny de la Peña went on to establish the virtual reality studio Emblematic Group. Luckey continued to develop his goggles and co-founded Oculus VR, maker of the Oculus Rift headset, which was sold to Facebook in 2014.

Meanwhile, Raney Aronson-Rath, then-Deputy Executive Producer of the PBS investigative documentary series FRONTLINE, was exploring emerging media and new storytelling techniques, challenging the series to push beyond the long-form, linear films for which it is known.

Raney Aronson-Rath

In 2014, as a fellow at MIT’s Open Doc Lab, Aronson-Rath began to see how VR might bring FRONTLINE’s journalism to life in new ways. She recognized the promise of VR as a journalistic tool. She also knew it would raise its own ethical and editorial questions and could challenge the series’ established standards and guidelines. “The question became, how do we make certain FRONTLINE’s VR efforts embody our brand of tough but fair reporting and filmmaking?” Aronson-Rath said.

In 2015, the Knight Foundation offered Aronson-Rath, now Executive Producer of FRONTLINE, the opportunity to collaborate with Emblematic Group and jointly explore these challenges by producing a series of VR projects and developing a set of best practices and guidelines for other news organizations working in the medium.

For the last three years, journalists, producers, designers and engineers from FRONTLINE and Emblematic Group have worked together to produce two VR experiences that each deploy the power of fully immersive, room-scale VR in the service of deeply reported narrative journalism. As part of the initiative, The Media Impact Project, a research organization at USC’s Annenberg Norman Lear Center which studies the impact of media on society, conducted testing exploring how the new technology being used by FRONTLINE and Emblematic engages and informs audiences.

What follows are the lessons gleaned throughout this collaborative effort, shared to foster future opportunities for meaningful immersive journalism, and to help establish standards to guide other journalists and media organizations participating in this developing field.

State of the Technology

Terminology, Tools & Techniques

Virtual reality is one of the most rapidly growing media of our time. Advances are announced on a near constant basis. New ways of capturing data, of allowing viewers to interact with content, and of distributing VR present endless opportunities in this nascent space. Major news organizations working in VR include The New York Times, which distributed a million Google Cardboard headsets with its Sunday paper and has made its VR app available on smartphones, and Time Inc., whose LIFE VR app covers topics including the attack on Pearl Harbor and an ascent of Mount Everest.

But the very factors that make VR so powerful and innovative—the head-spinning rate of change and the endless new possibilities for expression—also make it dauntingly difficult to explain, let alone manage. The range of experiences available to consumers is vast, from videos that simply offer a spherical field of view to interactive environments that deploy the artistry and techniques of video games and animated films.

Producing VR is an even more complex process. Recommendations for the best 360° camera rig can become outdated in a matter of months, making hardware decisions difficult. Volumetric video recording can sometimes replace an expensive motion capture session, but it may require an experienced producer to make the call. The kind of headset that the viewer will use determines the overall parameters of any given piece, meaning even the earliest ideation session must be informed by an awareness of the distribution plan and the capabilities of the various platforms.

Part of the challenge is that even the terminology is in flux and still being defined. The term “virtual reality” is often used to refer to both 360° videos and “volumetric” VR pieces, which are in turn referred to by some as “room-scale” or “walk-around” or “true” VR. There are also multiple terms for production techniques, such as “volumetric video capture” versus “videogrammetry.”

As a modest first step toward making things a little more understandable, we have taken a stab at defining some key terms. Although we may be a long way off from a uniform, integrated production and distribution system, we can at least begin to craft a shared language that lets us agree on what it is that we are trying to achieve.

Below is a brief glossary of key terms with definitions. Each comes with the caveat that these definitions are porous, subject to slightly varying interpretations and also liable to be replaced or left behind, especially as the technology advances and new forms of hardware and software become available.

Presence

This is the single defining characteristic of virtual reality: the way in which, thanks to a certain combination of sensory input, your mind can trick your body into feeling as though it is somewhere else. Though “presence” has already become a clichéd term, the phenomenon is not a fad, a novelty or a gimmick. It is an established field of neuroscience, and academics around the world debate both its positive and negative potential. Researchers have tracked the factors that affect the level of presence achieved, from visual detail to frame rate to the quality of a character’s body language. Others have warned of the dangers of a medium that can tap so directly into our central nervous system.

Presence: A viewer experiencing Hunger in Los Angeles at the 2012 Sundance Film Festival gets down on his knees as he approaches a seizure victim.

Empathy

This is another well-documented phenomenon (and much-used term). Feeling present in an experience generates empathy on the part of the viewer toward the characters depicted. A number of clinical studies, as well as a large body of anecdotal evidence, show that viewers have a stronger emotional response to a scene witnessed in VR than to one watched on a 2D screen.

360° Video (or Cinematic VR)

Video that captures a spherical field of view, allowing the viewer to look in any direction as if they were at the center of a globe. Although this creates a certain degree of immersion, the viewer is tethered to that central spot and cannot alter their position within the environment. 360° video is the most accessible variant of VR. In its simplest form, it is viewable using only a mobile phone. The viewer can take in the entire field of view either by swiping on the image to change orientation or by waving the phone around in the air (“magic window”). Using a headset achieves greater levels of presence for 360° video. This can be a standalone device with a built-in screen, or a peripheral into which users insert their own phone.

Volumetric VR (aka Room-Scale or Walk-Around VR)

Any experience in which the viewer can move freely inside the environment, examining the scene from different viewpoints and observing characters from different angles, can fall under this label. It requires a defined physical space in which the viewer can roam (hence “room scale”) as well as external sensors that track the position of the headset, allowing it to adjust what the viewer sees in real time based on their position. A newer generation of headsets features outward-facing sensors mounted on the primary device, thus obviating the need for separate external sensors.

Traditionally, the only means of creating volumetric environments has been via video game platforms such as Unity and Unreal. Because these environments are built using computer graphics (CG), they have not attained the same level of realism as 360° video. Hence the trade-off: visual verisimilitude versus the ability to move. However, new techniques such as photogrammetry and volumetric video now promise the best of both worlds: fully 3D environments and characters that achieve the same level of visual detail as traditional still images and video footage.

Interior and exterior locations from Kiya, an Emblematic Group/New York Times production about a fatal domestic violence incident in North Charleston, S.C. At left are photographs; at right are the CG recreations.

Spatial Narrative

Journalism aims to communicate, as accurately as possible, what happened, where and when. Volumetric VR is uniquely able to convey the spatial dimension of events, such as the distance between a shooter and his victim or the exact sight lines afforded by a particular vantage point.

Above: A selection of still images used to construct the volumetric environment for Emblematic Group’s Use of Force. Below: A CG model of a border guard, placed inside the 3D scene.

Photogrammetry

A means of capturing 3D spaces in high-resolution photographic detail. The photographer takes multiple overlapping images from multiple points within the environment. A postproduction process then aligns the images relative to one another and triangulates the features they share. This creates a geometrically precise “mesh” onto which the images are mapped. The result is a virtual environment the viewer can walk around in, captured at a level of detail that rivals still photography.
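Real photogrammetry software estimates the camera positions itself and solves for huge numbers of points at once. The sketch below isolates only the core triangulation idea under stated assumptions. It assumes two cameras with known positions and no rotation, a simple pinhole lens model, and noise-free pixel measurements. It uses the midpoint method, one textbook way to triangulate. This is not necessarily what any particular photogrammetry package does, and the camera values are hypothetical.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def scale(a: Vec3, k: float) -> Vec3:
    return (a[0] * k, a[1] * k, a[2] * k)


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def project(point: Vec3, center: Vec3, f: float, cx: float, cy: float) -> Tuple[float, float]:
    """Pinhole projection for an (assumed) unrotated camera looking down +z."""
    x, y, z = sub(point, center)
    return (f * x / z + cx, f * y / z + cy)


def pixel_to_ray(pixel: Tuple[float, float], f: float, cx: float, cy: float) -> Vec3:
    """Direction of the viewing ray through a pixel, for the same camera model."""
    u, v = pixel
    return ((u - cx) / f, (v - cy) / f, 1.0)


def triangulate_midpoint(c1: Vec3, d1: Vec3, c2: Vec3, d2: Vec3) -> Vec3:
    """Midpoint of the shortest segment between rays c1 + s*d1 and c2 + t*d2."""
    w0 = sub(c1, c2)
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w0), dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    s = (b * e - c * d) / denom  # position along the first ray
    t = (a * e - b * d) / denom  # position along the second ray
    p1 = add(c1, scale(d1, s))
    p2 = add(c2, scale(d2, t))
    return scale(add(p1, p2), 0.5)


# Hypothetical setup: two photos taken 1 m apart with the same lens
# (focal length 1000 px, image centre at 960, 540).
F, CX, CY = 1000.0, 960.0, 540.0
cam1, cam2 = (0.0, 0.0, 0.0), (1.0, 0.0, 0.0)
true_point = (0.5, 0.2, 4.0)  # e.g. a corner of a cot, 4 m away

# The same feature as it appears in each photo...
px1 = project(true_point, cam1, F, CX, CY)
px2 = project(true_point, cam2, F, CX, CY)
# ...is turned back into two viewing rays, and their crossing point is recovered.
estimate = triangulate_midpoint(
    cam1, pixel_to_ray(px1, F, CX, CY), cam2, pixel_to_ray(px2, F, CX, CY)
)
print(estimate)
```

With perfect measurements the two rays meet exactly, so the estimate matches the original point. With real, noisy photos the rays narrowly miss each other, and the midpoint gives a best guess. Production pipelines refine millions of such points together.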

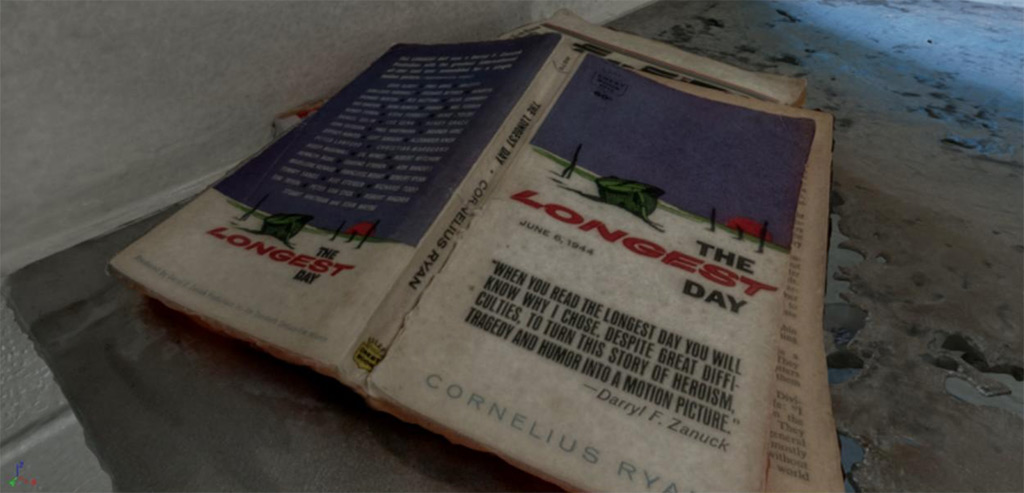

A solitary confinement cell in Maine State Prison captured using photogrammetry for After Solitary, the first collaboration between FRONTLINE and Emblematic Group

Detail from After Solitary

Volumetric Video Capture (aka Videogrammetry)

A technique for recording people “in the round.” Volumetric video capture, or videogrammetry, uses an array of more than 50 video cameras, usually in a dedicated lab. The result is a near-video-quality 3D figure that can be viewed from any angle, even as it moves. Placing these figures into a volumetric environment opens up huge possibilities for the level of realism achievable in volumetric VR.

A volumetric video capture session at the 8i studio in Los Angeles. More than 50 cameras capture the subject from all angles before the video is stitched together.

Nausea

One of the most common misconceptions about VR is that the medium itself causes motion sickness. This is generally not the case. While a small percentage of viewers do have a mild reaction to any kind of immersion in VR, the vast majority of cases are caused by a simple mistake on the part of the director: the use of extended tracking shots. If a viewer’s eyes tell them they are moving while their inner ear registers that they are standing (or sitting) stock still, the resulting disconnect will usually—though not always—result in a sensation of nausea.

Embodiment

The level of immersion achieved in volumetric VR, where the viewer’s physical movements determine their position within the experience. One of the most powerful examples of the phenomenon is the way in which placing a viewer close to a precipice generates an intense feeling of vertigo. Many viewers have to be coaxed to step “off the ledge,” even though they know that there is no actual drop in front of them.

Gamification

The practice of using elements and functionality commonly used in video games in order to illustrate and illuminate “real-world” situations and events, often making use of the hand controllers that are included with volumetric headsets such as the HTC Vive.

Dimensionalized

Dimensionalized assets are captured flat and then converted into 3D objects through interpretation. In Greenland Melting, the final scene was filmed with 360° video rather than captured using photogrammetry or another 3D capture method. Because users needed to be able to walk around in the scene, its 3D information had to be interpreted and created from the flat imagery available. First, a high-resolution still was rendered from the 360° stitching software. An artist then used it as a texture while adding 3D mesh information in the ZBrush software. Combining this 3D mesh with the high-resolution texture produced a volumetric rocky surface bearing real captured texture from the surface of Greenland.

Parallax

Parallax is a key trait of volumetric VR and provides a sense of dimension. In the real world, when people move, they see different angles of an object. Likewise, in walk-around VR, the user can see angles of an object that are hidden from any single point of view. For example, in Greenland Melting, a user standing in the research station aboard the boat can look underneath the desk. In 360° video experiences, by contrast, the user is fixed at one point of view with a panoramic video around them. There is no parallax because they cannot move. Dimensionalizing 360° assets, as described above, is important for preserving the effect of parallax.
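Simple geometry shows why parallax matters most for nearby objects. The sketch below uses a deliberately simplified model. It assumes the object starts straight ahead of the viewer and the viewer steps sideways, perpendicular to the line of sight. The distances and step size are hypothetical examples, not measurements from the piece.

```python
import math


def parallax_shift_degrees(object_distance_m: float, head_shift_m: float) -> float:
    """Angle (degrees) by which an object initially straight ahead appears to move
    when the viewer steps sideways by head_shift_m metres.

    Simplified geometry: the object sits object_distance_m in front of the
    viewer's starting position, and the step is perpendicular to that line.
    """
    return math.degrees(math.atan2(head_shift_m, object_distance_m))


step = 0.3  # a hypothetical 30 cm sideways step
near_desk = parallax_shift_degrees(1.0, step)       # a desk edge about 1 m away
far_glacier = parallax_shift_degrees(2000.0, step)  # a glacier about 2 km away
fixed_360 = parallax_shift_degrees(1.0, 0.0)        # 360° video: the viewpoint never translates

print(f"desk: {near_desk:.2f} deg, glacier: {far_glacier:.4f} deg, 360 video: {fixed_360:.1f} deg")
```

Under these assumptions, the nearby desk swings by roughly 16.7 degrees and the distant glacier by less than a hundredth of a degree. In 360° video, where the viewpoint cannot move, nothing shifts at all. This is why close-range elements, such as the rocks and research pods in the foreground, needed real geometry.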

Opportunities & Challenges

Case Studies from the Field

In 2016, FRONTLINE and Emblematic Group set out to explore the best ways to tell deeply reported, narrative stories deploying the immersive power of VR. Each collaboration represented a progression in both scope and technique. After Solitary was a relatively passive experience with a single character and fewer environments. Greenland Melting went further by enabling teleportation and incorporating multiple characters and environments.

At every stage of the process, meticulous attention was paid to the ethical and editorial challenges arising from these projects. The desire to create a compelling experience had to be balanced with the essential need to convey the story accurately, fairly, and with journalistic integrity. Since VR grants viewers the opportunity to experience a story firsthand, there was a critical need for transparency about the recreated nature of the various scenes presented, a consideration that factored heavily into many creative decisions.

Below is a detailed look at the various challenges that arose throughout the production of each project, as well as how each was solved.

After Solitary

As FRONTLINE and Emblematic began brainstorming suitable stories to explore in this medium, a piece about solitary confinement immediately became a strong candidate.

FRONTLINE producers Dan Edge and Lauren Mucciolo had spent years documenting the topic, with unprecedented access to the Maine State Prison. Their films were groundbreaking and thorough, but both were aware of one major limitation: There was simply no way to capture the visceral experience of having been there in person.

With this in mind, the team set out to create an immersive piece on solitary confinement. A former inmate would be interviewed using videogrammetry, and the resulting 3D, hologram-like figure would be inserted into an ultra-realistic, 3D solitary confinement cell, captured via photogrammetry. This would allow viewers to feel as though they were actually in the cell with the subject, hearing the story firsthand. While VR provided a unique means of documenting and illuminating this controversial practice, the production process raised a number of technological, editorial and ethical challenges and questions.

Filming Interviews

To be interviewed using videogrammetry, the interviewee needed to travel to Los Angeles to be filmed in a specialized green-screen studio outfitted with more than 40 cameras. This posed several challenges. Many inmates who had recently served time in solitary were on probation and subject to travel restrictions, and some of the guards could not take time off to fly across the country.

For the interview to be filmed in an ethical manner, the subject needed to be able to travel, to be made aware of the technologies in use, and to be comfortable sharing his experience in a unique way that would place him in a virtual solitary confinement cell.

With those parameters in mind, producer Lauren Mucciolo spent months speaking with dozens of former inmates, ultimately landing on Kenny Moore, a recently released man who appeared to be well-suited for the project. Moore was not bound by any travel restrictions and was an avid video game user, already familiar with the technology the team would be using to tell the story. Moore expressed particular interest in sharing his experiences in VR, stating that he believed doing so could help better educate others. Still, because Moore had experienced paranoia and mental instability during and after his time in solitary, the team flew his girlfriend—his primary emotional support—to L.A. to accompany him to the interview.

Once Moore agreed to participate as the central character, the team faced another ethical decision: What should he wear during his interview? Some thought it would create a more immersive experience for Moore to be in a jumpsuit like the one he’d worn in prison; others believed that, because the hologram of Moore would appear within his original cell, it was important for viewers to understand that Moore was no longer in solitary confinement and was not actually in the cell during the interview. There was also a concern that donning the jumpsuit could be a psychological trigger for Moore. In an effort to be transparent, accurate and fair to Moore, the team asked him to wear his everyday clothes for his interview.

The team also was careful to conduct and edit Moore’s interview in a way that made clear he was not presently in the cell, but was speaking retrospectively about his experiences.

Building the Story Environments

The team wanted the 3D story environments—the cell, Moore’s current bedroom, and other spaces viewers would explore—to be as realistic and accurate as possible. Thanks to FRONTLINE’s unprecedented access to Maine State Prison, the team was able to capture one of the cells in which Moore had served time using photogrammetry. Producers also found some of the objects that had been in Moore’s cell—for example, a standard-issue tube of toothpaste and a pair of sandals—and placed them back in the space for filming. The photogrammetry was carried out by the pioneering German company Realities.io.

To communicate the sense of confinement in the cell, the team also took advantage of the technical limitations of the HTC Vive headset, which allows viewers to move around only within a defined 10’ by 10’ space.

After experiencing After Solitary, many viewers remarked that they felt as though they had visited an actual solitary cell. Many were observed looking out the window, examining the metal toilet, trying to see their own reflections in the mirror, and even attempting to sit on the cot.

Animations and Recreations

Computer-generated (CG) animations were paired with Moore’s testimony to bring different aspects of his experience to life. For example, to demonstrate the technique described by Moore in his interview as “fishing”—in which inmates use a paper kite to pass small objects back and forth beneath cell doors—the team relied on 2D footage and audio filmed for the original FRONTLINE documentary, along with Moore’s description, to replicate the look, speed and sound of the kite as it moves in and out of the room.

While reporting the original documentary, producers found that many solitary inmates engage in self-harming behaviors, something that researchers point to as evidence of the psychological impact of confinement. This was a key part of Moore’s story—he had indeed engaged in these behaviors himself—but the original footage was graphic, and the team decided to find a different way to represent these behaviors in this more immersive medium. Ultimately, as Moore describes cutting himself and using his blood to write messages on the wall, animated song lyrics in a specialized red font slowly appear beside him on the recreated surface. The images are clearly animated, and thus less graphic, and cannot be mistaken for real.

Toward the end of the story, Moore describes how his mental state improved as his sentence neared its end and he began taking part in rehabilitation courses offered by the prison. To visually illustrate these elements of his story, the team used post-production lighting techniques to allow his cell to go from dark to light as he describes his improvement. Photographs of Moore’s family appear on his cell wall as he speaks about the motivation he experienced when thinking of them. While the light did not physically change in the solitary cell, and the family photos were not actually on Moore’s wall, the light effects and photos were designed to look like animations, allowing them to be used narratively without leaving the viewer with an inaccurate impression of their authenticity.

In addition to these effects, the team deployed sound cues to guide the viewer’s attention toward the various animations used throughout the piece—a subtle and effective way of addressing the major challenge of a frameless medium, in which viewers can easily miss key events.


Greenland Melting

Stories of climate change often feel distant and intangible. They focus on faraway places and events that (in some cases) won’t be felt directly for centuries to come. As a result, the team believed an exploration of the topic using the VR medium could be uniquely fruitful.

When FRONTLINE producer Catherine Upin learned that a team from NASA was traveling to Greenland to conduct groundbreaking research on its melting ice sheets, the team decided it was an ideal subject for the second piece. They set out to use cutting-edge VR technology to bring the story to life in a visceral and immediate way. Because the story needed to be rooted in important yet complex scientific concepts, FRONTLINE and Emblematic Group partnered with PBS’ award-winning science series NOVA to help tell the story and vet the science.

The resulting project, Greenland Melting, utilized various emerging technologies and brought many new challenges to the fore. What follows is a discussion of the various obstacles faced and solutions reached throughout production.

Building the Story Environments

FRONTLINE and Emblematic learned of NASA’s trip to Greenland with just a week’s notice. It was the researchers’ last expedition of the year, as Greenland would be inaccessible for the rest of the long winter season.

The team knew that traveling to the remote glaciers of Greenland would be difficult under any circumstances. Doing so on short notice, and with an extraordinary amount of specialized equipment in tow, proved exceptionally challenging. The project was intended to be shot primarily using photogrammetry, so that viewers could walk around and explore the landscapes. However, a number of obstacles, including complicated weather conditions and severe time constraints, prevented the team from capturing every environment in this way.

As a result, the nine different locations in Greenland Melting were created using a mix of formats and techniques, from photogrammetry to dimensionalized 360° video to high-fidelity CG model recreations. Two additional partners, Realtra and xRez Studios, worked alongside Emblematic engineers on the photogrammetry capture and modeling. In addition, xRez worked closely with Emblematic to employ NASA bathymetry data to build the underwater visualization.

The aerial glacier photogrammetry proved both technically difficult and costly. Shooting the scene required flying by helicopter for more than two hours across one of the most remote regions in the world, where the weather is notoriously unpredictable. When the team flew across the fjord to capture the photogrammetry of the glacier from above, the pilot informed the production crew that if they had to ditch the helicopter, they would not survive in the ice-filled water. Despite these challenges, the team was able to document the glacier successfully.

Because of time constraints, the production team was unable to capture NASA’s research vessel using photogrammetry while in Greenland, but they felt it was essential to find a way to do so after the fact. Photogrammetry would allow viewers to virtually walk on the ship’s deck and explore the cabins where key experiments had taken place.

The team ultimately secured permission to photograph the ship for modeling in a bay in the Netherlands, where it docked for just a few hours. Despite the severe time constraints, a local photographer skilled in capturing imagery for photogrammetry, Thomas Van Damme, was able to shoot the necessary material. But one large problem remained. If a viewer looked over the sides of the boat using the imagery as captured, they would see a Dutch bay rather than the Arctic fjord in which NASA had conducted its research.

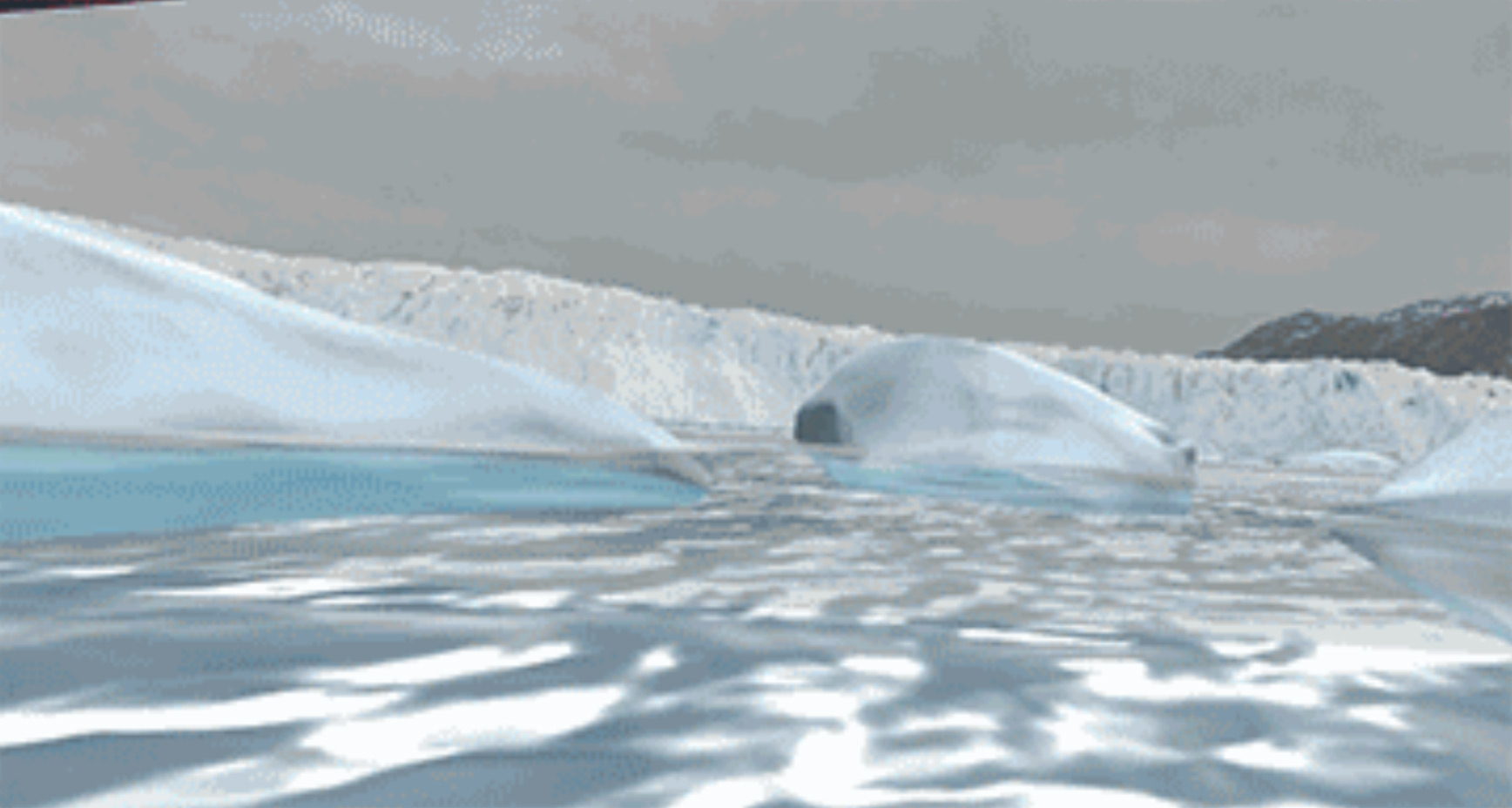

To recreate the fjord in which the vessel had been anchored, the team took an innovative approach. They combined the 360° footage captured on site with 2D images taken by the field producers. The result was a dimensionalized version of the scene in which the 3D model of the boat could be placed. The team also used NASA maps to pinpoint the vessel’s exact location during the trip. The result is a breathtaking and accurate rendition of the environment. The one caveat is that the icy rocks surrounding the fjord are slightly less sharp than they would have been if captured via photogrammetry.

Below are examples of the various techniques that were used.

Dimensionalized 360° Video

As noted previously, the main challenge with Greenland Melting was creating a consistent volumetric experience, despite the fact that some of the exterior scenes were only captured in 360° video. For the scene in front of the research modules (below), the team found a way of adding volume to 360° video, extrapolating information from the original footage to add depth to the rocks and snow.

The source footage was dimensionalized using a tool called ZBrush. The result allows a viewer to walk around on a 3D surface from which rocks protrude. The original 360° video is projected as a sky dome that meets the flat surface at the horizon.
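A sky dome typically works by looking up the matching pixel in the 360° frame for each viewing direction. The sketch below makes two assumptions. First, the footage is stored in the common equirectangular layout. Second, the axes follow a convention chosen here: +z forward, +x right, +y up. It is illustrative only and is not the pipeline used in Greenland Melting.

```python
import math
from typing import Tuple


def direction_to_equirect_pixel(
    x: float, y: float, z: float, width: int, height: int
) -> Tuple[float, float]:
    """Map a 3D viewing direction to (column, row) in an equirectangular frame.

    Assumed convention: +z is straight ahead (image centre), +x is right,
    +y is up. Yaw spans the full width; pitch spans the full height.
    """
    norm = math.sqrt(x * x + y * y + z * z)
    x, y, z = x / norm, y / norm, z / norm
    yaw = math.atan2(x, z)   # -pi..pi, 0 = straight ahead
    pitch = math.asin(y)     # -pi/2..pi/2, positive = looking up
    col = (yaw / (2 * math.pi) + 0.5) * width
    row = (0.5 - pitch / math.pi) * height
    return col, row


# Hypothetical 3840 x 1920 equirectangular frame.
W, H = 3840, 1920
for name, direction in [("ahead", (0.0, 0.0, 1.0)), ("right", (1.0, 0.0, 0.0)), ("up", (0.0, 1.0, 0.0))]:
    print(name, direction_to_equirect_pixel(*direction, W, H))
```

Any direction with zero pitch lands on the middle row of the frame. In a setup like the one described above, that row is the horizon line, where the projected sky dome meets the flat, dimensionalized ground.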

The original 360° video is pictured above, with the final volumetric scene below.

After the terrain had been dimensionalized, some elements—the research pods behind the scientists—still had to be recreated using CG modeling in order for the environment to have consistent parallax qualities. The final scene combines 360° video (of the sky), a CG model (of the research pods), and volumetric 8i capture (of the scientists).

Additionally, 3D data visualizations were layered over the volumetric environments, with dimensional graphics helping to illustrate and articulate the various factors scientists have documented as accelerating the melting process. For example, in one sequence, viewers can lower their heads to gain an underwater view, in which 3D colored arrows chart the flows of warm water that are eating away at the glaciers from below.

360° Video Backdrops

To convey the scale of Greenland’s ice sheets, the team wanted to include a flyover scene shot from a helicopter. But in 360° video, extended tracking shots tend to induce motion sickness. The solution was to create a CG model of the interior of the helicopter and embed it in the center of the video. The viewer’s ability to move around this space alleviates the tension between visual and inner-ear cues that usually generates nausea, and thus allows a more comfortable appreciation of the scene.

The various Greenland environments were built using a complex combination of techniques and sources, and yet appear seamless and realistic. To avoid misleading the audience, the team chose to foreground the nature of the process by using a text card at the beginning of the piece.

Animated Elements

Recreating environments using CGI raised questions about what elements (if any) could be layered into these spaces without misleading the audience. For example, much of NASA’s research took place beneath the water’s surface, but how could the team accurately recreate the ocean floor without having seen it? Ultimately, in order to illustrate the researchers’ key finding—the way that warm ocean water is eroding the glaciers from beneath—the team used NASA’s bathymetry data to create a CG model, which illustrated the flow of the water using animated arrows. The scene’s “infographic” quality reduces the risk of misleading viewers into thinking they are seeing actual underwater footage.

This animation came with another key challenge: How would viewers know to look beneath the ocean surface to see it? The team decided that when the scene opened, viewers would find themselves submerged in the water up to their waist; shortly after, a narrator suggests the viewer “try bending down to take a look” below.

Animated Photogrammetry

As a 3D composite of still images, photogrammetry is by nature static. But in Greenland Melting, we were able to animate individual photogrammetry models to great effect. Above, NASA scientist Josh Willis ejects a data-gathering probe into the water.

This was done by scanning the instrument separately at Emblematic and carefully cleaning up the data to make sure the reproduced model was accurate.

Filming Interviews

In order for NASA researchers Eric Rignot and Josh Willis to appear in 3D and guide viewers through the story, they needed to be filmed at 8i’s studio in L.A., where interviews are captured using more than 40 synced cameras. The process generates massive amounts of data, making it both expensive and technically complicated. As a result, interviews cannot run longer than 40 minutes. And because the material cannot be edited down using traditional techniques such as cutaways, each piece of footage used in a final project must be pulled from one continuous take.

These technical limitations proved particularly challenging in Greenland Melting. The researchers were used to speaking about their experiments in scientific terms that can be difficult for a broader audience to absorb. The team would not have the option of editing answers for length and clarity after the fact. So they had to prepare Rignot and Willis beforehand, working with the scientists to achieve usable, comprehensible responses before and during the interview itself. It took rigorous preparation and direction to ensure the interviewees communicated clearly and concisely without altering the meaning of their statements.

In addition, the tense in which the scientists spoke during the interview was carefully considered, to signal to viewers that the interviews had not actually taken place in the various environments in which the 3D models appeared. However, Josh Willis felt strongly that he should appear inside the 3D model of the NASA research plane wearing his jumpsuit. Although this gives the impression that Willis was filmed inside the plane, the team felt it was appropriate to honor his request.

Greenland Melting marked a huge technical leap forward from After Solitary. The piece ultimately incorporated multiple characters captured using videogrammetry; high-resolution photogrammetry captures of both interiors and exteriors; complex combinations of formats, including 360° video; and a multilayered, 3D data visualization. Along with these technical advances came additional editorial challenges, specifically in recreating scenes, adding CG and 3D elements, and maintaining journalistic integrity and transparency.


The Research

Exploring the Role of Virtual Reality in Journalism

While researchers have demonstrated several effects of virtual experiences, we know far less about how users interact with immersive journalism. Past research has found that virtual experiences can improve a surgeon’s skills during real operations, change the outcome of negotiations, and increase pro-environmental and pro-social behavior (Ahn, Bailenson, & Park, 2014; Gehlbach et al., 2015; Rosenberg, Baughman, & Bailenson, 2013; Seymour et al., 2002).

Researchers have attributed these effects to VR’s ability to evoke presence, encourage perspective taking, and give participants a sense of being in control of their environment. This ability to let users experience a new perspective, along with the consequences of taking on that perspective, is especially significant for journalistic content. Journalism serves multiple purposes, including accurately informing the public about current issues and framing public conversations to facilitate active civic participation (Tofel, 2014).

By placing users within specific events and giving them a degree of agency, immersive VR could foster new emotional connections between viewers and the events being depicted (Gajsek, 2016). Studying the unique immersive characteristics of VR is an important step toward understanding how this new technology compares with linear media in informing and engaging audiences on important social issues.

In After Solitary and Greenland Melting, FRONTLINE and Emblematic explored new ways to use VR to draw audiences into journalistic content. Both pieces capitalized on VR’s potential to give audiences a chance to visit unfamiliar places and perspectives. By placing users at the center of the story and giving them a degree of agency, virtual experiences upend traditional methods for telling journalistic stories and encourage a closer emotional connection to the events depicted.

In spring 2017, the University of Southern California’s Media Impact Project (MIP) partnered with Emblematic Group and FRONTLINE to evaluate After Solitary and Greenland Melting. Both pieces were developed for use in room-scale VR as well as in 360° and immersive 360° video. The goal of the research was to investigate participants’ responses to a journalistic experience in virtual reality. MIP set out to answer the following questions:

- What is the general viewer response to a VR journalism experience?

- What (if any) differences are there between viewing the same content in room-scale VR and in less immersive technologies (e.g., 360° video, 2D video), especially in terms of people’s experience, knowledge, attitudes and intended future behaviors?

To test these questions, MIP recruited research participants, randomly assigned them to experience the stories in room-scale VR or on other platforms such as 360° video and 2D video, and, through surveys and interviews, captured what the participants thought, felt and were likely to do in response to these stories.

Here is a brief overview of what MIP found:

After Solitary Key Findings:

- After Solitary’s key success was in using the virtual space to connect participants with Kenny’s physical state and emotional journey.

- After Solitary inspired interest in VR journalism.

- Room-scale VR was the most effective platform for creating a sense of spatial presence.

- It is not clear whether attitude and behavior changes differ by platform; the self-reports of all participants indicated more knowledge, interest and intent to take action.

- Medium matters: Participants using room-scale VR focused on their own perspective and experiences, but participants who used 360° video took the perspective of outsiders looking in and commented on details of Kenny’s story.

Greenland Melting Key Findings:

- Greenland Melting’s key success was in using the virtual space to demonstrate specific concepts by using time lapse or by placing users in physically impossible perspectives to make visual comparisons.

- Participants preferred the room-scale VR experience and felt more spatial presence in it, even though the video was easier to use.

- Participants who experienced the VR version first were more likely to report an emotional response to the material, including being “unsettled,” “concerned” and “frightened.” Other changes in attitudes or behavior, however, did not follow a clear pattern.

- VR participants did not do as well on the knowledge questions as participants who saw the 2D version. Because they were absorbed in exploring the virtual space, VR participants had fewer resources left to process the facts.

More research is needed to align the affordances of virtual reality platforms with the needs of users and the goals of journalists and creators.

MIP offers the following recommendations to those pursuing VR journalism:

- Room-scale VR is the most effective way to create a feeling of “being there.” For environments with unique spatial characteristics, it creates that feeling to a greater degree than regular video or even immersive 360° video. However, the novelty of the medium creates incentives to explore the space rather than to absorb information, and it opens enormous potential for distraction from complex narratives or information-dense sequences. Balancing these characteristics is the key to developing journalistic content for this medium.

- If participants in virtual reality can control where to look but cannot interact with objects in the environment, they have “presence” but not total “agency”; in other words, they have a limited ability to influence the environment. Producers should leverage their role as active viewers by rewarding curiosity about the environment. The challenge of the medium is to design for the freedom to explore and discover information, so that informational goals and user agency do not work at cross-purposes.

- Participants in immersive experiences are not yet familiar with the meaning of the medium’s editing conventions, so it is still important to clarify the “rules” of the environment. Visual cues about spatial environments, such as where the horizon is or where the walls of a room meet, or controllers that represent a participant’s hands, can help participants stay oriented between scenes. Spatial audio cues should also be consistent. Unusual spatial positioning or movement should not be deployed alongside crucial informational content, in case the participant has an adverse physical reaction or is too distracted by the unfamiliar experience to recognize, encode and retain information. For sequences that integrate significant movement into the experience, producers should provide mechanisms to detect non-participation and to offer prompts or alternative choices.

- Having a character in a virtual experience to provide guidance and context for information is extremely valuable. Although participants noticed artifacts of the videogrammetry process and wanted each figure’s appearance to be more naturalistic, the benefits outweighed the costs, and these figures provided some of the most striking moments in both After Solitary and Greenland Melting.

- Improving the technical and narrative aspects that contribute to or interfere with immersion could improve some outcomes. For example, using the wireless controllers to trigger the next scene, or tracking an individual’s gaze and varying how content sequences play out based on where the participant’s attention is, could help participants keep up with the flow of information and not feel like they were “missing out.”

- VR experiences absorb users’ attention for short, intense periods of time. These experiences inspire users to seek more information afterward, but it is not yet known whether VR is the most effective medium for committing facts to memory, as the novelty of the spectacle can be distracting.

- New users appreciate VR experiences and are inspired to look for more content after using them, but they do not have access to the hardware in everyday life. Distribution remains a challenge. Live events are an effective way to build excitement for VR experiences or capture gatekeepers’ attention, but Web-based, sharable content still reaches the bulk of any piece’s audience. New efforts in WebVR distribution are underway, but at the time Greenland Melting and After Solitary were produced, these efforts were not yet technologically advanced enough to support their playback.

VR Journalism Guiding Principles

Virtual reality journalism is an emerging genre, practiced by innovative storytellers who regularly encounter new ethical and editorial issues, as demonstrated in the case studies above. These guidelines are informed by the experiences and insights of these early practitioners, who have negotiated some of the thorniest issues presented by this immersive medium to date. They are not intended to be comprehensive, nor could they be. They are based upon existing, widely adopted ethical standards and practices from other media, and will sound familiar to journalists who have worked for reputable news organizations. Just as with other media, journalists working in VR are encouraged to seek guidance and feedback on these issues from senior editorial managers and colleagues when ethical issues arise.

VR is a powerful medium, combining visual, aural and physical material to create an intensely immersive, informative and emotional experience. Producers should always consider this extraordinary potential impact and strive to use it in a way that satisfies the traditional standards of journalism: accuracy, fairness and transparency.

Accuracy

- As in all journalistic media, the core value of VR journalism is accuracy. The layers of visuals and sounds comprising a 3D environment must present the viewer with an accurate representation of reality.

- When creating a 3D environment, producers should consult multiple sources to ensure the original space is accurately represented.

- As a general rule, while VR producers must often adjust images in a 3D environment for scale and proportionality, elements within the images should not be added, subtracted or rearranged in a way that is not supported by the facts. If an element that does not appear in the original space is placed into an environment for storytelling purposes, producers must make it clear to viewers that this has taken place.

- Natural sound and sound effects are often used to create a more authentic and immersive 3D experience. Sound should only be added or edited if it helps convey an accurate understanding of the scene or story to the viewer.

- Music should be appropriate and in keeping with the narrative. As with sound effects, VR producers should guard against using music that gives the viewer a distorted or inaccurate impression.

- Current procedural and technological limitations make it challenging to capture every scene or element within a VR story in 3D. As a result, animation, re-creations and dramatizations can be very effective and, in many cases, necessary. In some instances, viewers may be confused about the authenticity of these elements. Wherever there is a risk of confusing or misleading viewers, these elements should be clearly labeled or otherwise signaled.

Fairness

- As with all ethical journalism, VR producers must treat their subject matter and the people featured in the piece fairly in order to ensure the credibility of the report.

- Early research suggests that viewers may identify more strongly with perspectives presented in VR than with those presented in more traditional 2D media. While fairness does not demand that equal time be accorded to all conflicting viewpoints or opinions, it does require the acknowledgment and responsible statement of significant conflicting views. In presenting alternate viewpoints, producers should keep in mind that research suggests viewers may not register some forms of data in a VR experience, including titles and voice-overs, because they are preoccupied by the visceral, physical sensation of “being there.” As viewers become more accustomed to the medium, this effect may diminish, but more research is required to determine whether this is indeed the case.

- While a viewer’s agency within a walk-around 3D environment can create a more immersive experience, the audience may miss key facts or pieces of information if they choose not to explore or interact with certain story elements. Producers may find it helpful to use tools such as text, sound and light to alert viewers to such key elements, and/or find ways to ensure they are communicated regardless of a viewer’s choice to interact.

- Because of the visceral impact of a virtual reality experience, producers should consider providing appropriate warnings if the production includes scenes that may be shocking, gruesome, explicit or sensitive. And, as with any kind of journalism, producers should be sensitive to the potential impact of the story on people featured in it or on those close to them; e.g., victims of violence or their families. In many cases, producers and editors may find it appropriate to blur such images or to depict them in a stylized way.

Transparency

- Because VR can give viewers the agency to explore a story firsthand, they may be unaware of the extent to which a piece has been intentionally directed and designed. To address this, producers should be transparent about their production techniques and about how those techniques affect viewers’ perception of reality or of re-creations. In all cases, the guiding principle should be to avoid misleading viewers. Many news organizations publish accompanying stories alongside VR productions to explain how the piece was produced and to answer likely questions about the authenticity of the story. Alternatively, these disclosures can be provided by any other means that ensures viewers clearly understand how the piece was made.

- Current technological limits require 3D interviews to be succinct and each sync bite used to come from one continuous take. As a result, producers must sometimes prep interviewees more extensively than they would in more conventional media. In doing so, producers should aim to help an interviewee communicate clearly and concisely, without changing the meaning of their statements. In cases in which significant prep work has been required for an interview, this process should be disclosed to viewers.

About Us

FRONTLINE, U.S. television’s longest-running investigative documentary series, explores the issues of our times through powerful storytelling. FRONTLINE has won every major journalism and broadcasting award, including 89 Emmy Awards and 20 Peabody Awards. Visit pbs.org/frontline and follow us on Twitter, Facebook, Instagram, YouTube, Tumblr and Google+ to learn more. FRONTLINE is produced by WGBH Boston and is broadcast nationwide on PBS. Funding for FRONTLINE is provided through the support of PBS viewers and by the Corporation for Public Broadcasting. Major funding for FRONTLINE is provided by the John D. and Catherine T. MacArthur Foundation and the Ford Foundation. Additional funding is provided by the Abrams Foundation, the Park Foundation, The John and Helen Glessner Family Trust, and the FRONTLINE Journalism Fund with major support from Jon and Jo Ann Hagler on behalf of the Jon L. Hagler Foundation.

Emblematic Group creates award-winning immersive content powered by proprietary technology. Founded in 2011 by VR pioneer Nonny de la Peña, Emblematic has been a leader in volumetric storytelling and one of the world’s premier producers of virtual, augmented and mixed reality. Hunger in Los Angeles was the first-ever VR documentary to be shown at the Sundance Film Festival, in 2012. Since then, the company has built a critically acclaimed body of work that has included tracking the chaos of the Syrian civil war, capturing the tension of a wheel change during the Singapore Grand Prix, and conveying the scope and scale of climate change. Emblematic partners with organizations including Google, Mozilla, The Wall Street Journal and The New York Times to create both tools and content that enlighten, empower and educate audiences.

Acknowledgements

This project was funded by the John S. and James L. Knight Foundation through a grant to investigate best practices and the ethics of immersive virtual reality journalism. We thank Knight Foundation for its generous support.